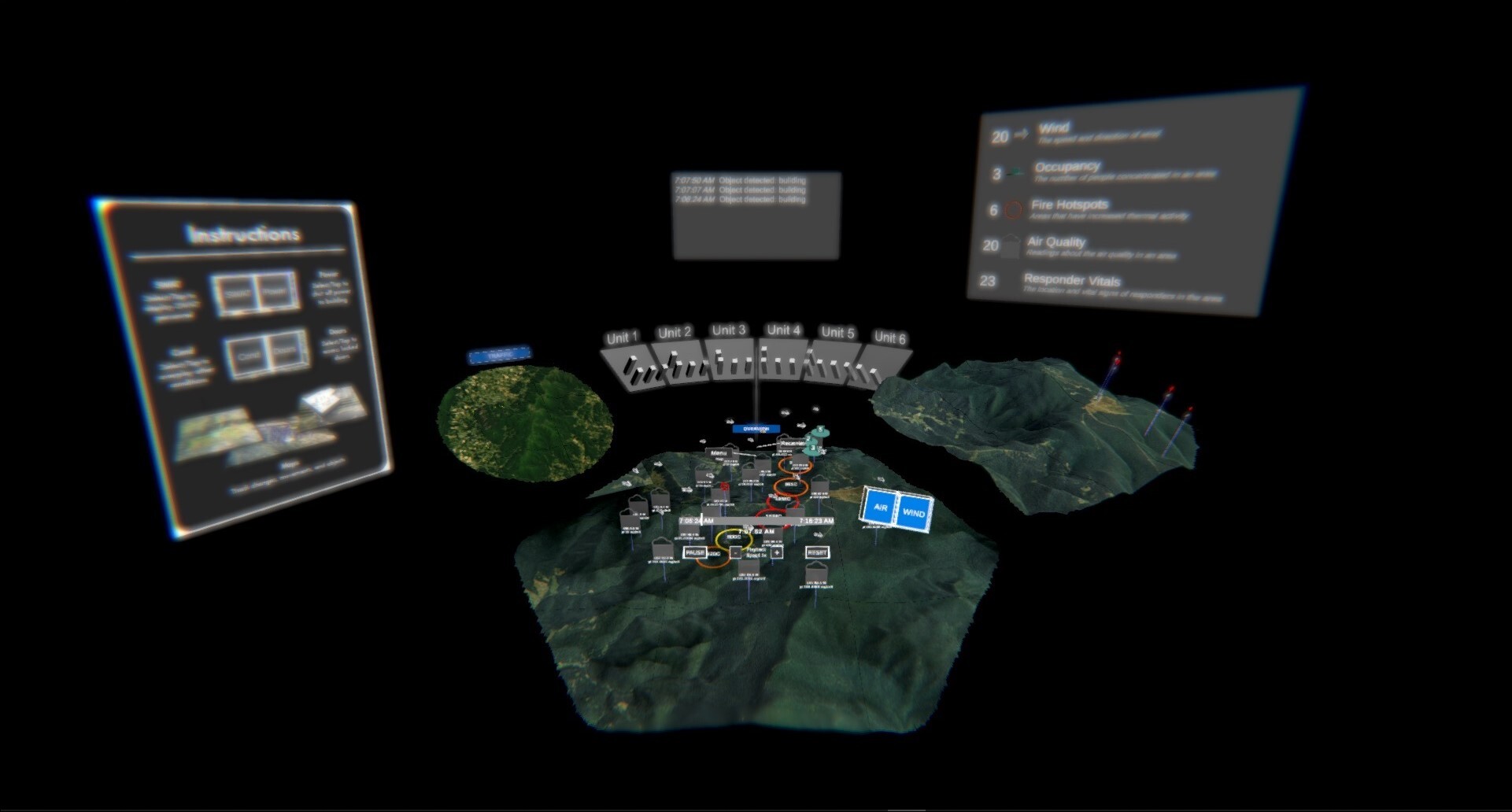

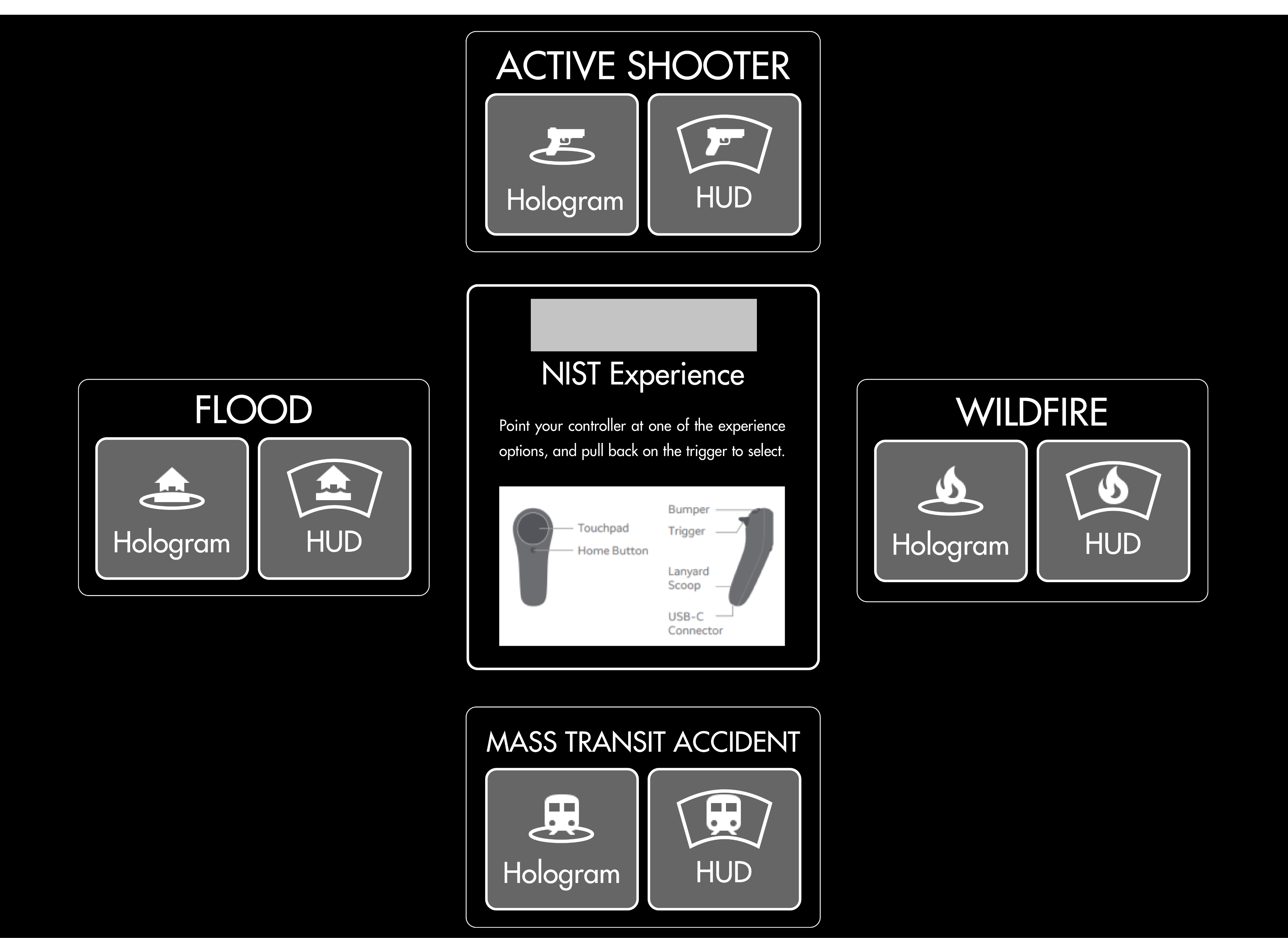

User Research and Competitive Analysis

Who are our users, and what are their pain points?

To understand first responders, we needed to learn more about them. We started with a competitive analysis of current systems, drawing on NIST's seminars; examples are shown below:

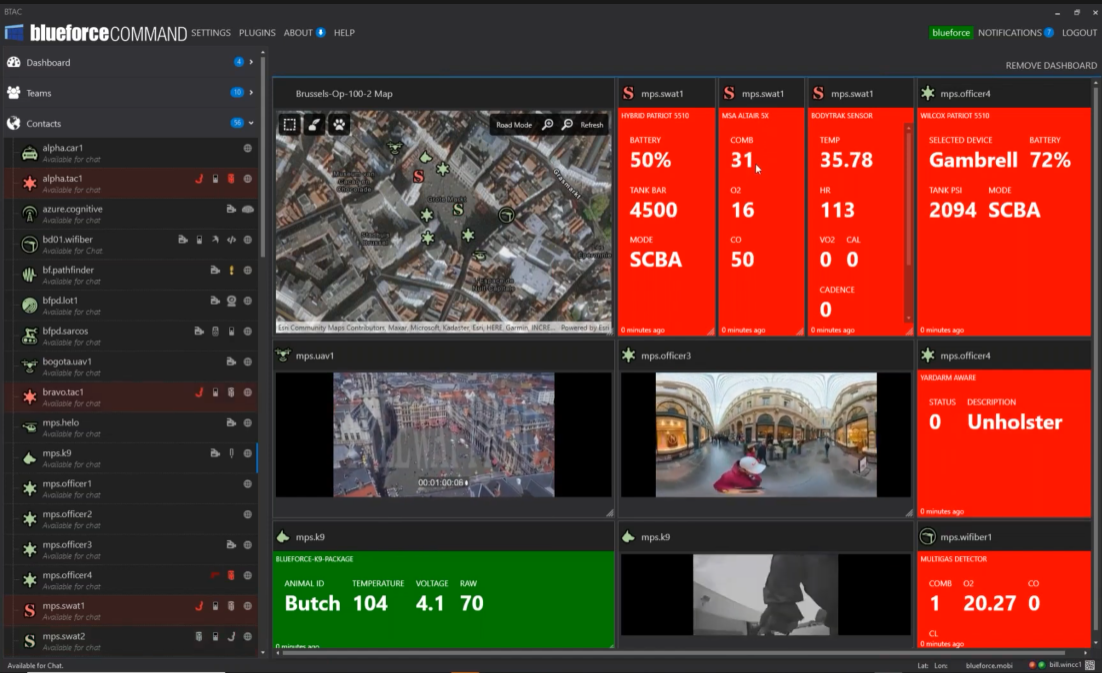

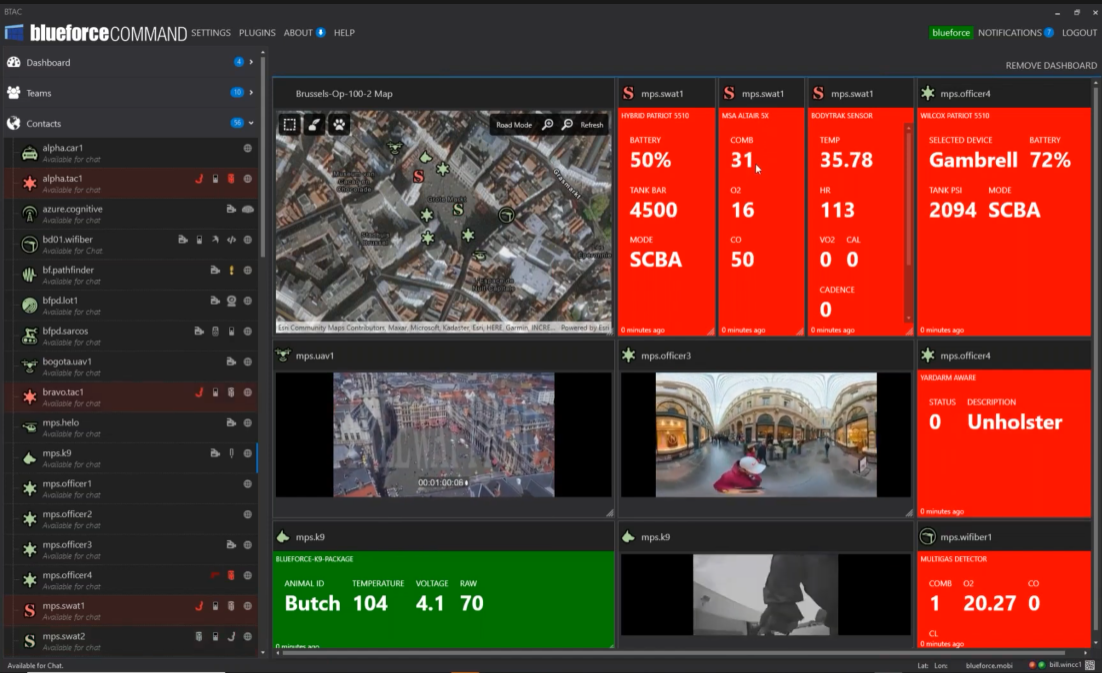

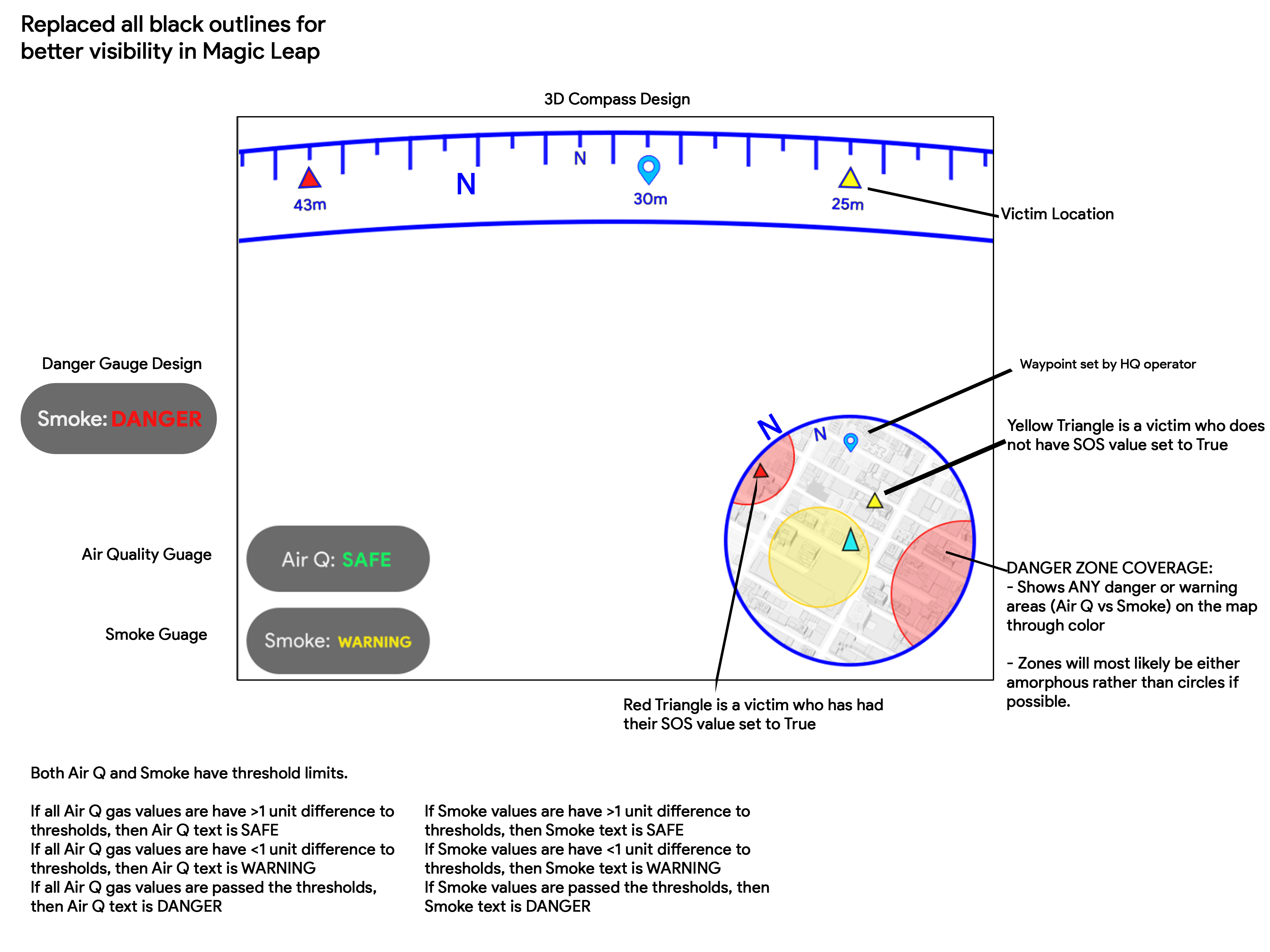

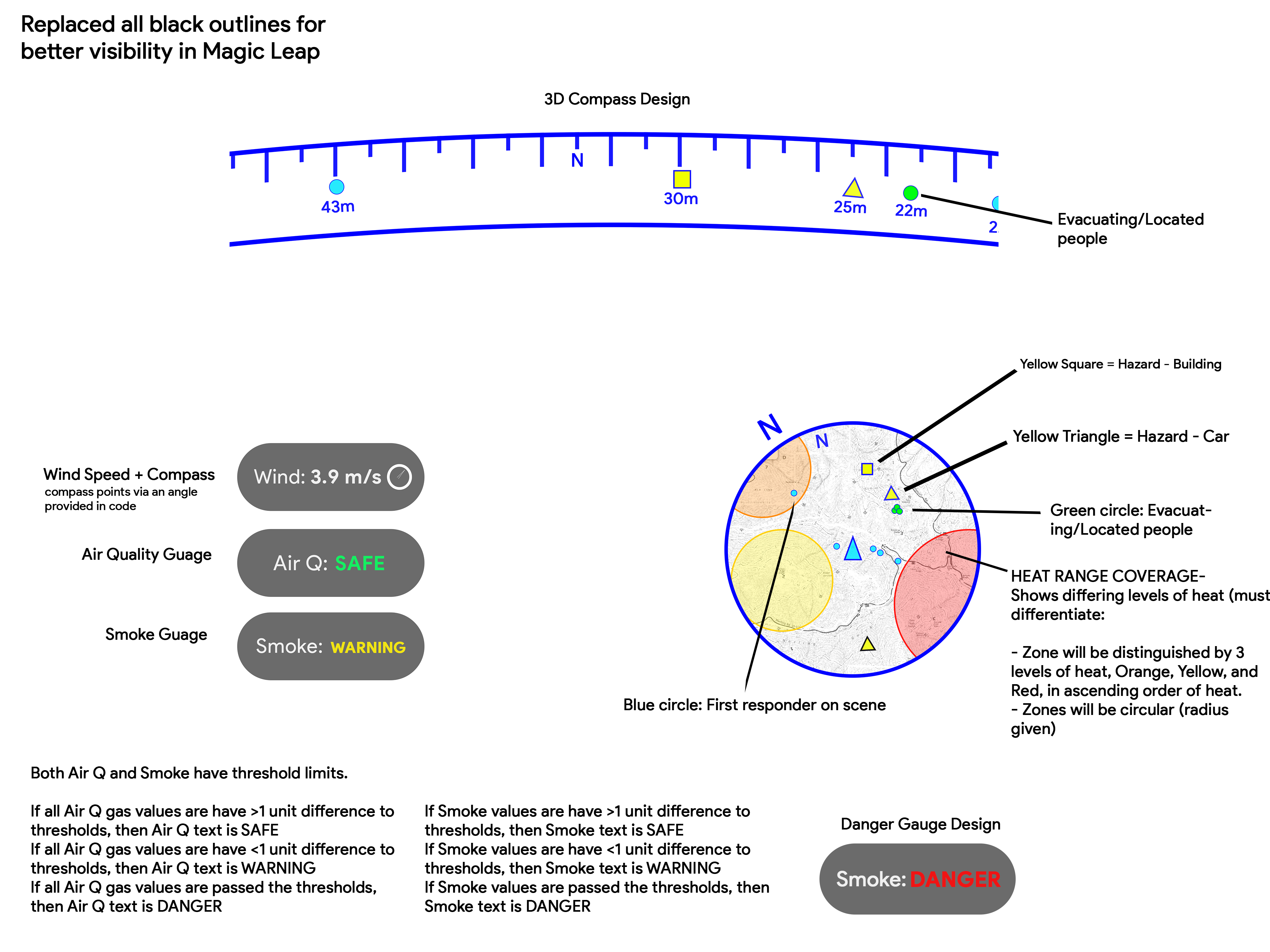

The current solution uses a flat, dashboard-style layout with widgets for body monitors, camera feeds, and GPS. Through interviews with fire chiefs and firefighters, we gathered insights on their roles, goals, and challenges. Combining these findings with our competitive analysis, we identified key takeaways:

Pain Point #1

Current systems are crowded and limiting

These dashboards present an overwhelming amount of information. We also found that they cannot follow multiple personnel simultaneously.

Finding: Our solution needs to be able to show multiple datasets at the same time.

Pain Point #2

Lack of correlation between datasets

Datasets are displayed arbitrarily. Nothing links related datasets to one another.

Finding: Our process needs to include ways to display all relevant information about the personnel in question.

Pain Point #3

Responders struggle with location datasets

First responders in the field would greatly benefit from knowing where they are in relation to points of interest (victims, hazards, people of interest).

Finding: The HUD system should include ways to ingest GPS data and display it in a usable way.
